The Dawn of a New Uncertainty

We stand at the threshold of a technological revolution unlike any before. Artificial intelligence now permeates every corner of modern life: composing documents, analyzing markets, diagnosing illnesses, and, increasingly, shaping decisions once reserved for human judgment. The promise is extraordinary: enhanced productivity, breakthrough innovations, and solutions to challenges once deemed intractable. Yet this promise arrives wrapped in uncertainty that no single leader, institution, or nation can fully anticipate or control.

The critical insight for today’s leaders is stark: we cannot legislate uncertainty away. We can only navigate through it with wisdom, foresight, and moral clarity.

Understanding the Unique Nature of AI Risk

Artificial intelligence represents a departure from traditional technological innovations. Unlike previous industrial revolutions where machines replaced physical labor with predictable outcomes, AI systems operate in fundamentally different ways that create novel categories of risk.

Machine learning models change as they are trained, adjusting internal parameters in ways that humans cannot easily inspect. Generative models produce synthetic content so realistic that distinguishing truth from fabrication becomes increasingly challenging. Deep neural networks reach decisions through pathways so complex that even their creators cannot fully explain the reasoning behind them.

These characteristics are not temporary bugs awaiting fixes; they are intrinsic features of learning systems. McKinsey's early 2024 survey found that sixty-five percent of responding organizations now regularly use generative AI, nearly double the share reported just ten months earlier, underscoring how rapidly this technology has integrated into business operations worldwide.

To avoid AI risk entirely would mean abandoning AI altogether, an impossibility for modern enterprises, governments, educational institutions, and societies. The challenge, therefore, shifts from risk avoidance to risk literacy. The question evolves from “how do we stop this?” to “how do we harness this responsibly, ethically, and wisely?”

The Paradox of Perfect Safety

Across diverse cultures and governance models, there exists a natural human preference for structure over uncertainty. Some societies have built their competitive advantages on efficiency and regulatory compliance. Yet history consistently demonstrates that innovation punished for early failure eventually migrates to more welcoming environments. The world’s most significant AI breakthroughs have emerged from ecosystems that embrace calculated experimentation rather than demanding perfect control.

This creates a fundamental paradox for leaders: excessive caution breeds stagnation, while balanced risk-taking breeds innovation. The path forward requires threading the needle between reckless abandon and paralytic over-regulation.

Defining Wisdom in the Age of AI

True wisdom does not reject risk; it transforms risk into purposeful action. What distinguishes wise risk-taking in AI development is the integration of bold technological advancement with unwavering ethical commitment, transparency, and accountability, thus ensuring that progress serves humanity rather than concentrating power.

Psychologists who study decision-making under uncertainty emphasize the concept of “knowing what you don’t know.” Applied to AI, this intellectual humility translates into several practical disciplines:

- Acknowledging the inherent limitations of datasets and models

- Actively interrogating algorithmic bias and fairness

- Anticipating downstream consequences, including economic disruption, moral implications, and social impacts

- Maintaining human oversight at critical decision points

The most courageous leaders today are those willing to say, “I don’t have complete certainty, but I will pursue answers responsibly.”

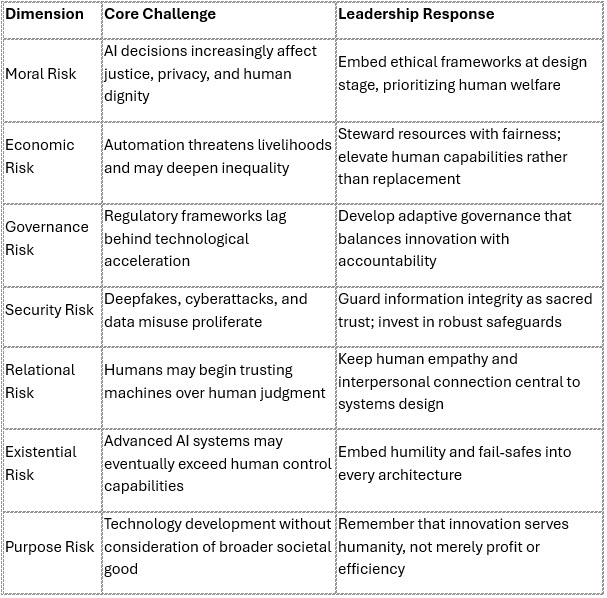

Seven Critical Dimensions of AI Risk Leadership

The Courage to Innovate with Conscience

Innovation without moral grounding breeds danger. Conscience without courage to act breeds paralysis. Exceptional leadership requires holding both in dynamic tension.

The future does not need AI optimists or AI skeptics; it needs AI realists with moral conviction. These are leaders who build ethical frameworks alongside profitable systems, who dare to test novel models while refusing to compromise foundational values, who push boundaries while respecting limits.

This integration of boldness and values represents not merely aspirational rhetoric but practical strategy. Organizations that anchor their AI development in clear ethical principles build more sustainable innovations and earn greater stakeholder trust over time.

Learning from Global Approaches

Different regions have adopted distinct philosophies toward AI governance, each offering valuable lessons:

United States: Champions “permissionless innovation,” enabling rapid experimentation that drives frontier research forward. This approach has accelerated breakthrough development but occasionally at the cost of adequate safeguards.

European Union: The EU AI Act, which entered into force in August 2024, represents the world’s first comprehensive legal framework on artificial intelligence, prioritizing human rights, transparency, and accountability. This regulatory approach protects citizens while potentially slowing deployment speed.

China: Pursues aggressive, state-coordinated AI development at industrial scale, demonstrating how strategic national alignment can accelerate competitive positioning in global technology leadership.

Singapore: Invests substantially in trustworthy AI frameworks and regulatory sandboxes, attempting to balance innovation with governance, though challenges remain in cultivating true entrepreneurial risk culture.

These varied approaches reveal a fundamental truth: freedom without ethics risks chaos, while ethics without courage risks stagnation. Sustainable leadership integrates both dimensions.

A Practical Framework for Managing AI Uncertainty

Leaders facing AI-related decisions can apply a systematic approach to managing uncertainty:

1. Recognize Inevitability: Accept that AI risk cannot be eliminated, only managed intelligently. Waiting for perfect safety guarantees means never beginning.

2. Establish Ethical Guardrails: Implement transparent data governance, maintain human oversight for consequential decisions, and conduct regular bias audits across AI systems.

3. Cultivate Organizational Discernment: Train teams in moral reasoning and ethical decision-making frameworks, not merely technical compliance procedures. Ethics cannot be outsourced to legal departments alone.

4. Demand Explainability: Test AI models with explainability as a default requirement. Black-box systems making consequential decisions represent unacceptable risk in most applications.

5. Enable Controlled Experimentation: Create safe environments for failure that reveal hidden flaws early, before deployment at scale. Structured failure is vastly preferable to unstructured crisis.

6. Align Profit with Purpose: Ensure AI development uplifts human flourishing rather than exploiting vulnerabilities. Sustainable business models serve society’s interests alongside shareholders’ returns.

7. Lead with Intellectual Humility: Acknowledge limitations openly, invite diverse perspectives proactively, and commit to continuous learning as technology evolves.
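For teams that want to make the "regular bias audits" in step 2 concrete, one possible starting point is a simple comparison of approval rates across groups. The sketch below is illustrative only: the function names, the record format, and the 0.2 review threshold are assumptions made for this example, not an established standard, and a real audit would examine several fairness measures alongside human judgment.

```python
from collections import defaultdict


def selection_rates(records):
    """Return the share of positive decisions for each group.

    `records` is an iterable of (group, approved) pairs, a hypothetical
    format chosen for this sketch.
    """
    totals = defaultdict(int)
    positives = defaultdict(int)
    for group, approved in records:
        totals[group] += 1
        if approved:
            positives[group] += 1
    return {group: positives[group] / totals[group] for group in totals}


def parity_gap(records):
    """Difference between the highest and lowest group approval rates."""
    rates = selection_rates(records)
    return max(rates.values()) - min(rates.values())


def needs_human_review(records, threshold=0.2):
    """Flag the system for human review when the gap exceeds the threshold.

    The default threshold is an illustrative assumption, not a legal or
    industry benchmark.
    """
    return parity_gap(records) > threshold


if __name__ == "__main__":
    # Toy decisions from a hypothetical AI screening tool.
    decisions = [
        ("A", True), ("A", True), ("A", False), ("A", True),
        ("B", True), ("B", False), ("B", False), ("B", False),
    ]
    print(selection_rates(decisions))
    print(needs_human_review(decisions))
```

A check like this does not settle whether a system is fair; it only surfaces a disparity early so that the people accountable for the decision can investigate it, which is the kind of human oversight the framework calls for.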

The Greater Danger: Strategic Inaction

Paradoxically, attempting to avoid all AI risk represents the most dangerous strategy of all. History will not remember those who hesitated out of fear; it will remember those who acted with wisdom and moral courage.

Refusing engagement with AI because it feels unsafe resembles refusing to sail because the sea contains waves. Every technological revolution creates turbulence; wisdom learns to navigate skillfully rather than remaining anchored in harbor.

AI has arrived and continues evolving. It will draft contracts, evaluate performance, design products, and increasingly advise leadership on strategic decisions. The relevant question is not whether we should permit its use, but whether we will anchor AI development in ethical principles before the technology redefines us.

Toward Wisdom-Centered Leadership

We inhabit a defining moment: not a choice between progress and decline, but between wisdom and fear, between purposeful engagement and reactive avoidance. Artificial intelligence itself represents neither threat nor salvation; it is a powerful tool whose ultimate impact depends entirely on how humanity wields it.

The future belongs to leaders who approach AI not with arrogance but with appropriate awe, recognizing that knowledge grows truly powerful only when guided by wisdom, empathy, and commitment to human flourishing.

UNESCO’s Recommendation on the Ethics of Artificial Intelligence emphasizes that protection of human rights and dignity must remain the cornerstone of AI development, based on fundamental principles of transparency, fairness, and human oversight. This global framework, adopted by UNESCO’s member states in 2021, provides essential guidance for leaders navigating these complex challenges.

Risk itself is not the enemy; ignorance and apathy are. The leaders who will shape tomorrow are those prepared to face AI risk directly, not recklessly, but with moral clarity and strategic wisdom. They will build systems that enhance human capability while respecting human dignity, that drive efficiency while preserving human connection, that pursue innovation while honoring timeless ethical principles.

The age of AI demands nothing less than leadership that integrates technological sophistication with moral wisdom: leaders who recognize that our most powerful innovations must serve our highest values.

References

- McKinsey & Company. (2024). The state of AI in early 2024: Gen AI adoption spikes and starts to generate value. Retrieved from https://www.mckinsey.com/capabilities/quantumblack/our-insights/the-state-of-ai-2024

- European Commission. (2024). AI Act. Retrieved from https://digital-strategy.ec.europa.eu/en/policies/regulatory-framework-ai

- European Union. (2024). Regulation (EU) 2024/1689 laying down harmonised rules on artificial intelligence. Retrieved from https://eur-lex.europa.eu/legal-content/EN/TXT/?uri=CELEX%3A32024R1689

- UNESCO. (2021). Recommendation on the Ethics of Artificial Intelligence. Retrieved from https://www.unesco.org/en/artificial-intelligence/recommendation-ethics

- McKinsey & Company. (2024). The state of AI: How organizations are rewiring to capture value. Retrieved from https://www.mckinsey.com/capabilities/quantumblack/our-insights/the-state-of-ai

DISCLAIMER

This article was created with the assistance of artificial intelligence technology. While every effort has been made to ensure accuracy and verify all referenced sources, readers should independently verify information for their specific use cases. The views and analysis presented are those of the author and do not constitute legal, financial, or professional advice. The author and publisher disclaim any liability for actions taken based on this content. References have been verified as of the publication date, but readers should confirm current information from original sources. This article is provided for educational and informational purposes only.

Accompanying images were generated using ChatGPT-5 artificial intelligence technology. These images are AI-generated illustrations created for visual enhancement purposes only and do not represent real photographs, actual events, or specific individuals. The AI-generated images are provided “as is” without any warranties regarding accuracy, completeness, or fitness for any particular purpose.

This article was written by Dr John Ho, a professor of management research at the World Certification Institute (WCI). He has more than four decades of experience in technology and business management and has authored 28 books. Prof Ho holds a doctorate in Business Administration from Fairfax University (USA) and an MBA from Brunel University (UK). He is a Fellow of the Association of Chartered Certified Accountants (ACCA) as well as the Chartered Institute of Management Accountants (CIMA, UK). He is also a World Certified Master Professional (WCMP) and a Fellow at the World Certification Institute (FWCI).

ABOUT WORLD CERTIFICATION INSTITUTE (WCI)

World Certification Institute (WCI) is a global certifying and accrediting body that grants credential awards to individuals as well as accredits courses of organizations.

In the late 1990s, several business leaders and eminent professors in the developed economies gathered to discuss the impact of globalization on occupational competence. The ad hoc group met in Vienna and discussed the need to establish a global organization to accredit the skills and experience of the workforce, so that workers could be globally recognized as competent in a specified field. A Task Group was formed in October 1999, comprising eminent professors from the United States, United Kingdom, Germany, France, Canada, Australia, Spain, Netherlands, Sweden, and Singapore.

World Certification Institute (WCI) was officially established at the start of the new millennium and was first registered in the United States in 2003. Today, its professional activities are coordinated through Authorized and Accredited Centers in America, Europe, Asia, Oceania and Africa.

For more information about the world body, please visit the website at https://worldcertification.org.
